To run even a basic analysis of something like well performance, an engineer can spend weeks merely collecting data related to the subsurface, operations, maintenance, and finance. Staff at ConocoPhillips, however, can bypass this time-consuming data curation process and begin analysis right away thanks to a program that was years in the making.

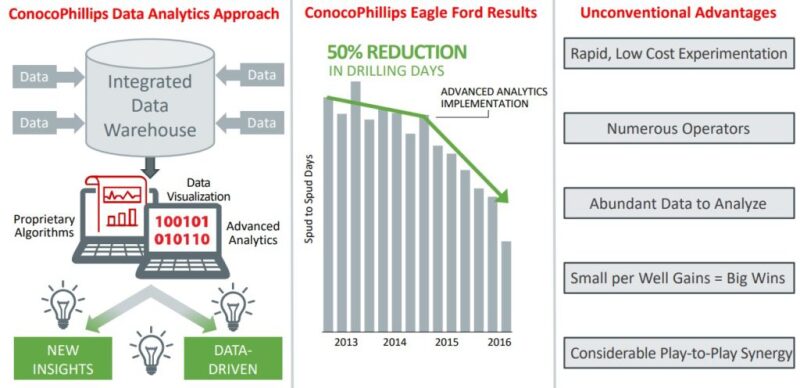

Recently scaled across its organization, the Houston independent’s integrated data warehouses (IDWs) serve as centralized data stores for staff involved in various disciplines including operations, production engineering, well construction, reservoir engineering, and geoscience. Deployment has resulted in improved well uptime, decreased drilling times, optimized completion designs, and increased understanding of subsurface characteristics, the company says.

The idea came about as the operator was collecting gobs of data from different rigs and different wells but had difficulty utilizing that data for interpretation—a common problem for companies in the early stages of analyzing big data. Instrumental to IDW implementation in Canada has been Patrick Stanley, data analytics lead in ConocoPhillips’ Canada business unit, who in 2016 helped establish a team, he said, “to act as a nucleus for data analytics and data integration” supporting the company’s Montney Shale business and the Surmont steam-assisted gravity drainage bitumen recovery facility.

Canada, Alaska, and Norway were ConocoPhillips’ first business units to consolidate data into a centralized data repository, an effort that dated back to the early 2000s and served as a precursor to the IDW. “These repositories were focused on enabling functional workflow such as production allocation or seismic analysis—less on cross-functional integration,” he explained.

The data repository approach was broadened into a full-fledged IDW in 2014 by the company’s Eagle Ford business, which incorporated data from all functions while simultaneously testing emerging commercial data warehouse technology. “The integration across functions has dramatically improved technical efficiency and time required to derive insight from the data,” Stanley said. “A user can pull business and technical data from the IDW directly into analytical tools such as Spotfire, minimizing what we call ‘personal data curation.’” By contrast, “the original data repository approach leveraged application-specific databases with front-end tools such as Microsoft Access,” he said.
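As a rough illustration of what cross-functional integration buys an analyst, the sketch below joins production data with cost data in a single query instead of stitching together exports from separate application databases. Everything in it is hypothetical: the table names, columns, well identifiers, and figures are invented for the example, and an in-memory SQLite database merely stands in for a warehouse. It does not describe ConocoPhillips’ actual schema or technology.

```python
import sqlite3


def build_demo_warehouse():
    """Create a tiny in-memory stand-in for a warehouse (all data invented)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE production (well_id TEXT, month TEXT, oil_bbl REAL);
        CREATE TABLE operating_cost (well_id TEXT, month TEXT, opex_usd REAL);
        INSERT INTO production VALUES
            ('W-1', '2018-01', 1000.0),
            ('W-2', '2018-01', 500.0);
        INSERT INTO operating_cost VALUES
            ('W-1', '2018-01', 8000.0),
            ('W-2', '2018-01', 6000.0);
        """
    )
    return conn


def lifting_cost_per_bbl(conn):
    """Join technical and business data to get operating cost per barrel."""
    rows = conn.execute(
        """
        SELECT p.well_id, c.opex_usd / p.oil_bbl
        FROM production AS p
        JOIN operating_cost AS c
          ON c.well_id = p.well_id AND c.month = p.month
        ORDER BY p.well_id
        """
    ).fetchall()
    return {well_id: cost for well_id, cost in rows}


if __name__ == "__main__":
    print(lifting_cost_per_bbl(build_demo_warehouse()))
```

The point of the sketch is only that, once both functions’ data share keys such as a well identifier and a period, the question becomes a one-line join rather than a manual curation exercise.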

Stanley estimates that analytics exercises in his experience at ConocoPhillips traditionally consisted of 80-90% data access, integration, and cleaning and just 10-20% analysis. “We are successful when we can flip this to be 80-90% focused on analysis,” he said.

Seeing Results and Scaling

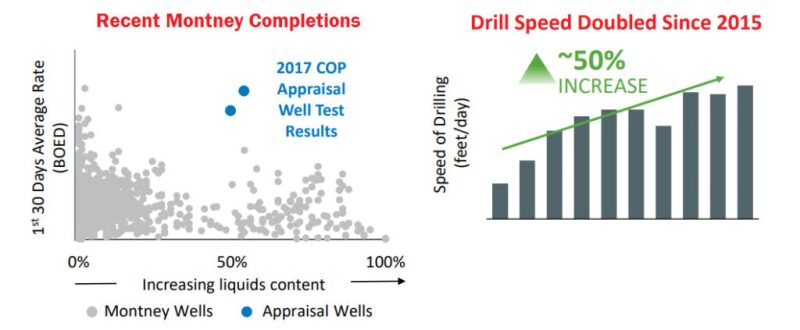

Expanding data analytics capabilities in the Eagle Ford has helped ConocoPhillips “drill 80% more wells per rig, recover 20% more hydrocarbons per well, and achieve an 8% increase in direct operating efficiency,” according to a company presentation. John Hand, ConocoPhillips’ technology program manager, noted during a recent SPE Gulf Coast Section forum that, thanks in large part to the company’s data capabilities, it’s shrinking its average drilling time per well in the Eagle Ford to 12 days from about a month. He believes the ability of operators to leverage big data is “going to be huge going forward because unconventionals are a big data problem.”

Following the Eagle Ford success, ConocoPhillips’ Canada, Alaska, and other Lower 48 business units adopted the standardized IDW architecture and technology in 2016. That same year, the operator kicked off a global strategy after seeing the cost-of-supply and lifting-cost improvements enabled by integrating petrotechnical and business functional data.

Now, “All of ConocoPhillips’ business units are leveraging analytics, and we are currently updating our implementation plans to establish foundational aspects such as training, IDW architecture, and technology in those” units, Stanley said. The company, which consists of six segments encompassing operations in 17 countries, now boasts more than 4,000 analytics practitioners, hundreds of proprietary applications, and 17 IDWs.

In effect, ConocoPhillips’ physics-based models are being augmented by emerging data science capabilities such as multivariate analysis and algorithm tools to develop optimization models, Stanley explained. “The combination of those two—the traditional [knowledge and methods] with the emerging analytics capabilities—is really what has given our reservoir engineering and development engineering teams the insight needed to continually tweak our completions design and drive greater recoveries from our unconventional wells,” Stanley said.
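For readers unfamiliar with the term, one simple form of multivariate analysis is a linear regression of a well outcome on several completion parameters at once. The sketch below is a minimal, standard-library illustration of that idea using ordinary least squares. It is an assumption-laden example, not the company’s method: the variable names (`proppant`, `spacing`), the data points, and the coefficients used to generate the synthetic outcome are all invented.

```python
def fit_linear(rows, targets):
    """Ordinary least squares via the normal equations (X^T X) b = X^T y.

    Returns [intercept, coef_1, coef_2, ...].
    """
    X = [[1.0, *r] for r in rows]  # prepend an intercept column
    n = len(X[0])
    # Augmented matrix [X^T X | X^T y].
    A = [
        [sum(x[i] * x[j] for x in X) for j in range(n)]
        + [sum(x[i] * y for x, y in zip(X, targets))]
        for i in range(n)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(n):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][n] / A[i][i] for i in range(n)]


# Synthetic, invented data: (proppant loading, stage spacing) in arbitrary units.
designs = [(1.0, 2.0), (1.5, 2.5), (2.0, 1.5), (2.5, 3.0), (1.2, 1.8)]
# Synthetic outcome generated from a known relationship so the fit can be checked.
recovery = [50.0 + 10.0 * proppant - 4.0 * spacing for proppant, spacing in designs]

intercept, proppant_coef, spacing_coef = fit_linear(designs, recovery)
```

Because the synthetic outcome is generated from a known linear relationship, the fit should recover an intercept near 50 and coefficients near 10 and -4. Real completion data would be noisy and would call for validation against the physics-based models the article describes.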

“So, through a rather iterative process that happened very quickly—over the course of 2 to 3 years—we’ve been able to reduce our cost of supply for our unconventionals by over [10%/year]. And, with [the company’s] 8 billion BOE of unconventional resources in North America, that’s a pretty significant achievement,” he said.
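To put the annual figure in perspective, the short calculation below compounds a fixed yearly reduction over the 2 to 3 years mentioned. It assumes exactly 10% per year, compounded annually. The quote says “over” 10%, and the article does not say how the reduction was measured, so treat the output as a rough floor under that assumption rather than a reported result.

```python
def cumulative_reduction(annual_rate, years):
    """Total fractional reduction after compounding a fixed annual reduction."""
    return 1.0 - (1.0 - annual_rate) ** years


if __name__ == "__main__":
    for years in (2, 3):
        total = cumulative_reduction(0.10, years)
        print(f"{years} years at 10%/year: about {total:.1%} cumulative reduction")
```

Under that assumption, the cumulative reduction works out to roughly 19% after 2 years and roughly 27% after 3.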

Who Handles the Data?

Integrating and making data transparent across multiple functions, however, “requires some governance to make sure we’re applying that data to the right case at the right time,” Stanley noted. “There’s financial information, there’s subsurface information, and there’s production information that, all out of context, could mislead you.

“So we’ve established a data governance process that has identified owners of the data—or functional owners across the business—who we bring together in this collaborative group to discuss the types of data that we’re storing in our warehouse, the access requirements for the data, the conditioning of it, and the various uses to make sure no one is being misled with the application of that data.”

Stanley said the Canadian team includes a former mud logger whose role has shifted from the drilling rig to the data room, where he is now in effect serving as a data analyst by leading the curation and integration of ConocoPhillips’ drilling data. Similar stories are seen in the company’s facilities automation group. These de facto data analysts work with ConocoPhillips’ data architects in IT to construct and populate the IDW. They also work with the business functions to ensure the data is set up so that the right people can access it.

“We also have a number of engineers on the team with petrotechnical backgrounds such as production engineering or reservoir engineering who were personally interested in data science,” he said. “These are the individuals who were the first to build new tools for their peer groups … to develop their own workflows or enhance workflows for their day-to-day work.” These were “the first to use coding—for example, Python or R—to make their day-to-day work more efficient.”

Stanley’s group and others like his around the company therefore “have taken engineers and business professionals who have domain knowledge and shown interest in leveraging these emerging skills and tools” and turned them into “citizen data scientists,” he explained. A network has been set up in the company’s business units so that aspiring citizen data scientists can receive training in coding and developing data models that augment their usual work, making the application “relatively straightforward to the user through the data warehouse.”

Then they can take on a capstone project relevant to ConocoPhillips’ business goals. During the entire process, prospective citizen data scientists are partnered with mentors who are senior subject matter experts in their domain as well as senior data scientists. “People ask, ‘Is the engineer of the future a data scientist?’ And I would say, yes, but the data scientist of the future is not necessarily an engineer,” Stanley said.

Going Agnostic

While “data and analytical technology continues to evolve at a rapid pace and ConocoPhillips has been quick to test these” new solutions, the company is “more focused on creating a new skillset, a new core competency” for its engineering and petrotechnical staff, he said. When the company does select a technology vendor, it emphasizes flexibility and simplicity. “We look for data platforms that we can deploy globally that enable high-speed data processing—large data, big data processing—and that could be consumed around the world without having to duplicate the effort,” Stanley said.

Focusing on data integration and developing analytics competencies across the business has kept ConocoPhillips’ analytics program agnostic and therefore sustainable, with a common architecture used for its data globally, he said. “So we can change vendors, partners at will because we recognize those things will progress rapidly and we’ll grab onto whatever’s the most efficient from a cost and technology perspective.”

As for data ownership, Stanley noted the operator has “very structured contracts” with third parties such as drill site service providers that collect data relating to completions or downhole equipment. But ConocoPhillips does share data-related knowledge and strategies by participating in joint industry projects such as Canada’s Oil Sands Innovation Alliance, which aims to speed up the pace of environmental improvements in oil sands operations.